Posted on Feb 16, 2022

Cox, G.E., Palmeri, T.J., Logan, G.D., Smith, P.L., & Schall, J.D. (in press). Spiking, salience, and saccades: Using cognitive models to bridge the gap between “how” and “why”. In B. Forstmann & B.M. Turner (Eds.), An Introduction to Model-Based Cognitive Neuroscience (2nd Ed.), Springer Neuroscience.

Chow, J.K., Palmeri, T.J., & Mack, M.L. (in press). Revealing a competitive dynamic in rapid categorization with object substitution masking. Attention, Perception, & Psychophysics.

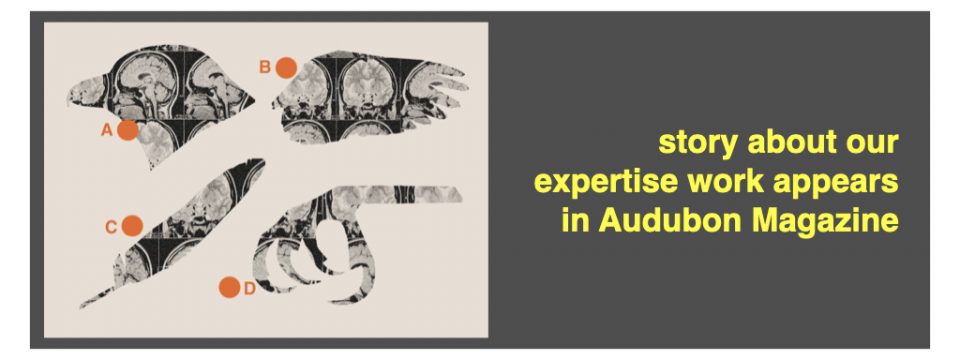

Carrigan, A.J., Charlton, A., Wiggins, M.W., Georgiou, A., Palmeri, T.J., & Curby, K.M. (in press). Cue utilisation reduces the impact of response bias in histopathology. Applied Ergonomics.

Chow, J.K., Palmeri, T.J., & Gauthier, I. (in press). Haptic object recognition based on shape relates to visual object recognition ability. Psychological Research.

Chow, J.K., Palmeri, T.J., & Gauthier, I. (in press). Visual object recognition ability is not related to experience with visual arts. Journal of Vision.

Carrigan, A.J., Charlton, A., Foucar, E., Wiggins, M., Georgiou, A., Palmeri, T.J., & Curby, K. (in press). The role of cue-based strategies in skilled diagnosis amongst pathologists. Human Factors.
